The Strategic Reality for Real Estate Firms and MLSs

AI agents are not coming. They are already inside your business. The firms and MLSs that authorized them in 2024 and early 2025 made a capability decision. They now need to make a governance decision.

The organizations that define these guardrails proactively will operate with confidence. They will be able to scale agent deployment because they have a framework that scales with it. They will be able to answer regulator questions, respond to client concerns, and contain incidents when they occur.

The organizations that do not will eventually face an incident they cannot explain: an email they did not send, a disclosure they did not authorize, or a data exposure they did not anticipate. In that moment they will discover that they have no log, no scope document, no vendor accountability, and no recovery protocol.

The industry spent 2024 debating whether to adopt AI.

It spent early 2025 writing employee policies.

The conversation that will define 2026 is harder: what happens when the AI you authorized stops waiting for instructions and starts making decisions?

We believe that every real estate firm, MLS, and technology company has already connected an AI agent to live business data, and you might not even know it. People are configuring AI as an extension of themselves, sharing their login credentials for document, email, and other individual software accounts. This is a mistake. AI needs to be treated as a separate user across all of your systems.

The Authorization Moment Is the Governance Moment

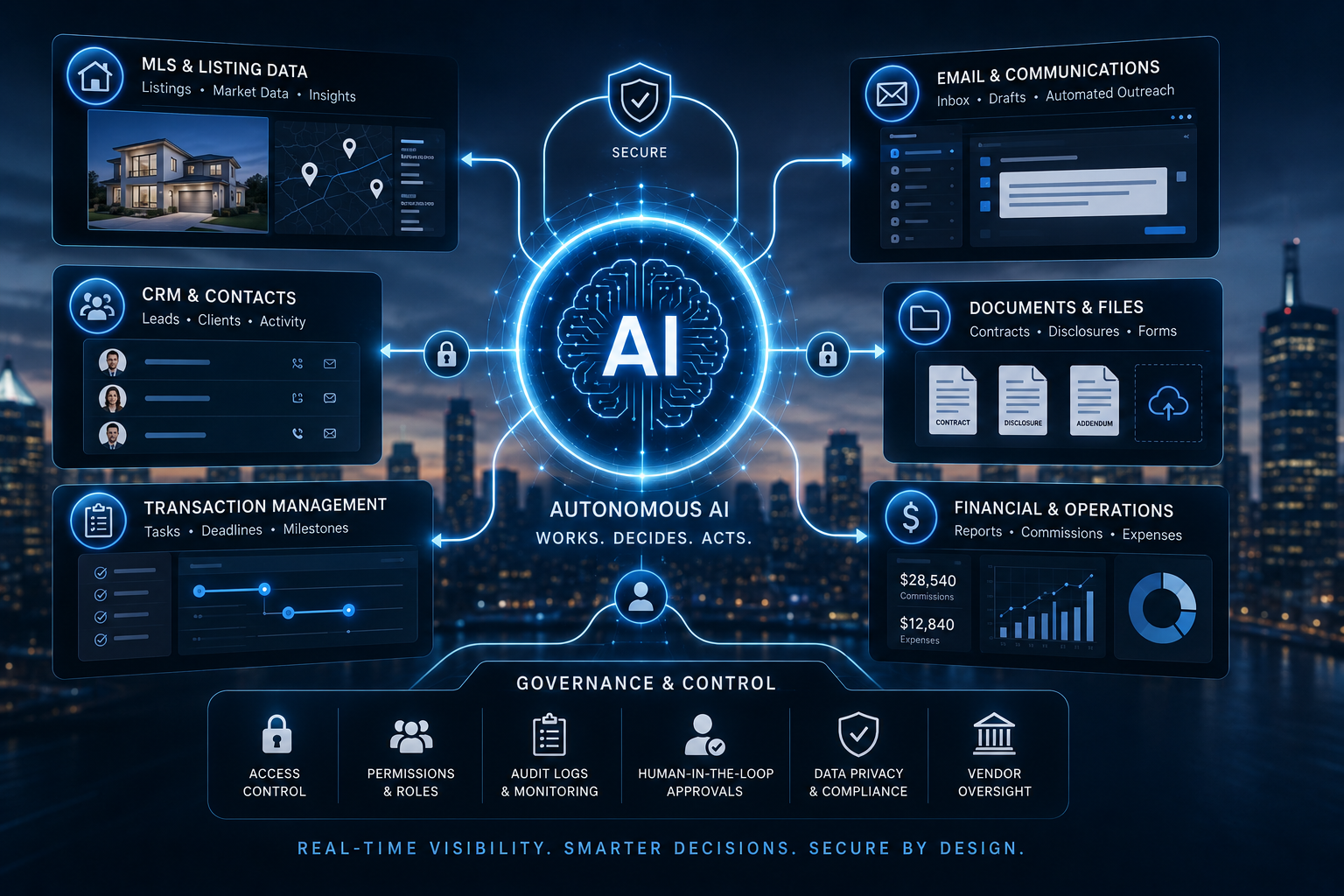

When a broker, MLS executive, or technology administrator grants an AI agent access to their systems, including documents, email, contacts, listings, operating procedures, organizational charts, and financial data, they have crossed a threshold that most organizations do not fully understand.

That moment of authorization is not the end of a decision. It is the beginning of a relationship with a new and consequential type of user.

The AI is now an authorized user in the business. It reads. It reasons. It acts. And unlike a new employee who asks clarifying questions, an AI agent does not hesitate when the path forward is ambiguous. It works to accomplish the task, regardless of the method. AI innovates, finds workarounds, and makes up answers if necessary to complete the job.

The question is not whether your AI agent should be shut off or whether those who gave the authorization should be admonished. The question is whether your organization has defined the boundaries of what the agent is permitted to do, and whether your vendor has done the same.

The Four Exposure Layers Every Real Estate Firm Must Address

Layer 1: Data Access Scope

Most AI agents are granted full access. An agent authorized to draft listing descriptions does not need financial records. An agent managing transaction timelines does not need full email access. The principle of least privilege, giving the agent only the access required for its specific function, is standard cybersecurity doctrine. It is almost never applied to AI agent deployments in real estate.
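As a minimal sketch of what least privilege could look like for an agent, the example below records an explicit scope per agent and denies anything not listed. The `AgentScope` class, the agent name, and the resource labels such as `listings:read` are hypothetical and chosen only for illustration; a real deployment would enforce the same idea through the permission system of each platform the agent touches.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentScope:
    """Hypothetical scope record: the only resources an agent may touch."""
    agent_id: str
    purpose: str
    allowed_resources: frozenset

    def permits(self, resource: str) -> bool:
        # Deny by default: anything not explicitly listed is out of scope.
        return resource in self.allowed_resources


# Assumed example: an agent that only drafts listing descriptions.
listing_writer = AgentScope(
    agent_id="listing-writer",
    purpose="draft listing descriptions",
    allowed_resources=frozenset({"listings:read", "listing_drafts:write"}),
)

for resource in ("listings:read", "financials:read", "email:read"):
    verdict = "allowed" if listing_writer.permits(resource) else "denied"
    print(f"{listing_writer.agent_id} -> {resource}: {verdict}")
```

The design choice worth noting is the default: the agent starts with nothing and access is added for a stated purpose, instead of starting with full access and subtracting.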

An AI agent is an autonomous, goal-oriented system that manages complex, multi-step workflows, while an AI skill is a specific, reusable function (like a “recipe” or tool) that an agent calls to execute a precise task. Agents are “who” acts, and skills are “how” they do it.

Layer 2: Task Autonomy and Decision Boundaries

Modern AI agents work through the problem independently, selecting tools, making inferences, and taking sequential actions. This is the capability that makes them powerful. It is also what makes undefined boundaries dangerous.

An agent directed to “follow up with leads who have not responded” might send emails you did not draft, at intervals you did not set, with language you did not approve. An agent directed to “manage the transaction file” might request documents from parties, flag compliance issues to regulators, or reclassify records in ways that alter the legal file.

The question for you: which decisions require human review, and which actions is the agent explicitly prohibited from taking?

Layer 3: Memory, Training, and Data Contamination

When you train an AI agent on organizational documents, financial records, and operating procedures (which is exactly how you should roll out AI), it does not simply reference that material. It internalizes it. The agent begins to generate answers that reflect your proprietary strategy, your pricing logic, your compensation structures, and your competitive intelligence, available to anyone who queries it with sufficient access.

When Seven Gables rolled out the Zillow Preview partnership, they loaded everything into a Zillow Services Help Bot. It’s all-knowing, and has been trained to give only answers that come specifically from the written instructions it has been trained on. The Bot gives the answer and links to the reference. More importantly, the company is able to see all of the Q&A and fine-tune the AI agent.

Do you know where the data you used to train or configure your AI agents is stored, who can access it, whether it is used to improve the vendor’s general models, and what happens to it when you terminate the contract?

Layer 4: Vendor Guardrails, or the Absence of Them

This is the layer that most organizations assume someone else has handled. No one has.

AI vendors operate on a spectrum. At one end are platforms with documented agent governance frameworks, including rate limits, action logs, human-in-the-loop checkpoints, role-based permission architectures, and contractual data use restrictions. At the other end are tools that ship agents with maximum autonomy and minimal controls, because that is what makes the demo impressive.

The real estate industry has no current standard for evaluating vendor AI governance. There is no RESO data standard for agent behavior. There is no NAR policy requirement for vendor guardrail disclosure. There is no MLS rule requiring AI vendors serving your business to document their control architecture.

That vacuum is the current state of the industry.

The Guardrail Architecture Every Organization Needs

These are the governance components that should be in place before any AI agent is granted access to live business systems: action logging, scope certificates, human-in-the-loop checkpoints, vendor guardrail disclosure, and an exit and revocation protocol.

Human-in-the-Loop Checkpoints. Define in advance which categories of agent action require human review before execution. Communications to clients or regulators. Financial transactions above a defined threshold. Any action that modifies a legal document or a listing record. These checkpoints are not inefficient. They are the difference between a supervised agent and an autonomous actor operating inside your fiduciary obligations.
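The review categories described above can be sketched as a simple gate that every proposed agent action passes through before execution, with each decision written to an action log. Everything named here is an assumption for illustration: the category labels, the `FINANCIAL_REVIEW_THRESHOLD` value, and the in-memory `ACTION_LOG` list stand in for whatever your platform and your policy actually define.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class Decision(Enum):
    EXECUTE = "execute"
    HOLD_FOR_REVIEW = "hold_for_review"


# Hypothetical policy values; set these from your own governance policy.
REVIEW_CATEGORIES = {
    "client_communication",
    "regulator_communication",
    "legal_document_edit",
    "listing_record_edit",
}
FINANCIAL_REVIEW_THRESHOLD = 500.00

ACTION_LOG = []  # In-memory stand-in for a durable, retrievable action log.


@dataclass
class ProposedAction:
    agent_id: str
    category: str
    description: str
    amount: float = 0.0


def checkpoint(action: ProposedAction) -> Decision:
    """Decide whether an action may run or must wait for a human."""
    if action.category in REVIEW_CATEGORIES:
        decision = Decision.HOLD_FOR_REVIEW
    elif (action.category == "financial_transaction"
          and action.amount > FINANCIAL_REVIEW_THRESHOLD):
        decision = Decision.HOLD_FOR_REVIEW
    else:
        decision = Decision.EXECUTE
    # Log every decision, including the ones that were allowed to run.
    ACTION_LOG.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "agent_id": action.agent_id,
        "category": action.category,
        "description": action.description,
        "decision": decision.value,
    })
    return decision


email = ProposedAction("lead-agent", "client_communication", "follow-up email")
note = ProposedAction("lead-agent", "internal_note", "summarize call notes")
print(checkpoint(email).value)
print(checkpoint(note).value)
```

Held actions would then be routed to a named human reviewer; that routing is outside this sketch.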

Vendor Guardrail Disclosure. Before authorizing any AI agent to access business systems, require the vendor to provide written answers to four questions: What actions can this agent take without human confirmation? How are agent actions logged and retrievable? Is data used to train or improve your general models? What are the data handling terms if we terminate? Ask about insurance as well: Is the vendor insured for incidents caused by faulty AI? Are you listed as an additional insured? Vendors who cannot answer these questions have not built a governable product.

Exit and Revocation Protocol. You need a documented procedure for revoking an agent’s access, immediately, completely, and verifiably, if something goes wrong or the vendor relationship ends. Most organizations have no revocation protocol. They have a login they can disable. That is not the same thing.
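To illustrate why a revocation protocol is more than disabling a login, the sketch below keeps an inventory of every system an agent has been granted access to, revokes all of them in one step, and returns a report that records what was revoked and whether anything remains. The `AccessRegistry` class and the system names are hypothetical. In this in-memory version the verification step is trivial; in practice it would mean checking each connected system to confirm the agent's credentials and tokens no longer work.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AccessRegistry:
    """Hypothetical inventory of every grant held by each agent."""
    grants: dict = field(default_factory=dict)

    def grant(self, agent_id: str, system: str) -> None:
        self.grants.setdefault(agent_id, set()).add(system)

    def revoke_all(self, agent_id: str) -> dict:
        # Remove every grant at once, not only the primary login.
        revoked = sorted(self.grants.pop(agent_id, set()))
        # Verify: re-read the inventory and confirm nothing is left.
        remaining = sorted(self.grants.get(agent_id, set()))
        return {
            "agent_id": agent_id,
            "revoked": revoked,
            "remaining": remaining,
            "verified": not remaining,
            "revoked_at": datetime.now(timezone.utc).isoformat(),
        }


registry = AccessRegistry()
for system in ("email", "document_store", "crm"):
    registry.grant("transaction-agent", system)

report = registry.revoke_all("transaction-agent")
print(report["revoked"], report["verified"])
```

The report is the point: it is the written evidence that access was removed immediately, completely, and verifiably.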

The AI you authorized is already operating inside your business. The question is whether your organization is governing it.