The National Association of REALTORS MLS Issues and Policy Committee made a bold move to require RESO Data Dictionary compliance by Jan 1, 2016, and the RESO RESTful Web API by June 30, 2016. Further complicating matters is the groundswell of third-party portals requesting direct feeds from MLSs. Data sharing between MLS systems, RETS Update, and the system conversions taking place across the industry today deepen the data transport considerations. Wrapping it all together with a lit match could easily lead to mass hysteria.

WAV Group took a look at what the largest MLSs in the nation are doing and we came upon a similarity that is worth noting. Large MLSs do not always represent best practices, but more often than not they do. Along with size comes complexity and a wider group of data management needs.

Flexible Data Feed Configurations By Vendor

One of the most significant problems facing MLSs when it comes to data management is managing variations of data feeds. A straight IDX feed can be configured in a pretty standard way. But feeds to other MLS systems, VOW feeds, broker feeds, feeds to publishers with broker opt-in or agent opt-in, and other special feeds quickly become untenable. Most MLSs just bail out on variations and simply send all of the data with an agreement that says: “only use what is permitted.” This is a disaster waiting to happen and the worst business practice in data management. A friend gave me the analogy that sending all of the data but telling someone they can only use a portion of it is like depositing all of your money into someone else’s bank account and telling them they cannot use it. Moreover, sending all of the data when only a portion is necessary requires additional data feed bandwidth, even if the vendor is throwing away the parts they don’t need. Most MLSs already use 3TB to 4TB of data per month. Big markets use 10TB to 12TB per month.

To solve this problem many of the large MLSs like the Houston Association of REALTORS provision a feed according to the exact data license agreement and only send the licensed data. They can even get fancy and turn watermarking on or off by data feed or any number of other interesting and flexible configurations.
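To make the idea concrete, here is a minimal Python sketch of provisioning a feed from its license rather than sending everything. It is an illustration only, not how any particular MLS or RETS server implements it. The `FeedLicense` class, the `provision_record` function, and the `_watermark` flag are hypothetical names, and the field names are simply written in the style of the RESO Data Dictionary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeedLicense:
    """Hypothetical license record: what one data feed is allowed to receive."""
    feed_name: str
    allowed_fields: frozenset
    watermark_photos: bool = False


def provision_record(listing: dict, feed_license: FeedLicense) -> dict:
    """Return a copy of the listing containing only the licensed fields."""
    record = {
        field: value
        for field, value in listing.items()
        if field in feed_license.allowed_fields
    }
    # Assumption: watermarking is carried as a per-feed flag that a
    # downstream photo service would honor.
    record["_watermark"] = feed_license.watermark_photos
    return record


# Illustrative listing; the values are made up for the example.
listing = {
    "ListingKey": "12345",
    "ListPrice": 350000,
    "PublicRemarks": "Charming bungalow.",
    "PrivateRemarks": "Lockbox code on file with listing agent.",
}

idx_license = FeedLicense(
    feed_name="idx",
    allowed_fields=frozenset({"ListingKey", "ListPrice", "PublicRemarks"}),
    watermark_photos=True,
)

idx_record = provision_record(listing, idx_license)
```

The point of the design is that unlicensed fields never leave the server, so the agreement no longer has to rely on the vendor discarding them.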

Becoming MLS Vendor Agnostic

There are two styles of storing and managing MLS data. Most MLSs in America store it in the vendor’s database. There are some advantages to operating the MLS application with the integrated vendor database, until such time as you want to switch MLS systems. At that point, the system conversion becomes more challenging, because data from one system does not necessarily fit nicely into another system. MLSs like CRMLS, MRIS, NorthstarMLS and others operate their own database separately from the MLS vendor system. This strategy has lots of advantages, which include being vendor agnostic. You can run multiple MLS applications (front end systems) on top of the same database.

If you are using a separate RETS server against your own database, none of the vendors connected to the RETS server experience any disruption when the MLS system changes. When you use the vendor’s server, which changes along with the MLS system itself, all of the vendors need to map to the new server for a data feed. This is highly disruptive to the productivity applications provided by technology companies to your agent and broker subscribers.

Reporting and Troubleshooting

When you have hundreds of data feeds connecting every day, they inevitably break for a variety of reasons. Enterprise IDX vendors report that markets like MLSPIN or MyFloridaRegionalMLS can tell them exactly when the error occurred and what the error was. This means that the problem can be solved in minutes or hours rather than days or weeks.

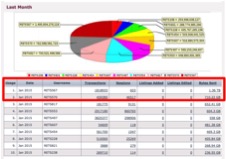

Moreover, MLSs can see which vendors are consuming the most data. For example, some vendors download the entire data set multiple times per day, every day, rather than pulling the incremental updates. With bandwidth reporting by vendor, MLSs can quickly and easily spot these amateur practices and educate the vendor on how to improve their process. In the screenshot above, two vendors are each absorbing a TB of data per month when the other top 10 vendors are only using a little more than 500GB. It makes you wonder if they are sharing their RETS credentials with another company or just managing data poorly.
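A bandwidth report of this kind boils down to summing bytes per vendor and flagging the outliers. The Python sketch below shows one simple way to do it, under stated assumptions: the log format (vendor name, bytes transferred), the function names, and the "1.5 times the median" threshold are all hypothetical choices made for illustration, not a description of any vendor's reporting tool.

```python
from collections import defaultdict
from statistics import median


def bytes_by_vendor(log_entries):
    """Sum bytes transferred per vendor from (vendor, nbytes) log entries."""
    totals = defaultdict(int)
    for vendor, nbytes in log_entries:
        totals[vendor] += nbytes
    return dict(totals)


def flag_heavy_vendors(totals, factor=1.5):
    """Return vendors whose usage exceeds `factor` times the median usage.

    The threshold is an arbitrary illustration; a real MLS would tune it.
    """
    if not totals:
        return []
    threshold = factor * median(totals.values())
    return sorted(v for v, used in totals.items() if used > threshold)


# Made-up monthly figures in GB, loosely echoing the pattern described above.
log = [
    ("vendor_a", 600), ("vendor_a", 400),   # 1000 GB in total
    ("vendor_b", 500),
    ("vendor_c", 520),
    ("vendor_d", 480),
]

totals = bytes_by_vendor(log)
heavy = flag_heavy_vendors(totals)
```

A flagged vendor is only a prompt for a conversation. Heavy usage may point to repeated full downloads, shared credentials, or simply a large legitimate footprint.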

RETS Syndication

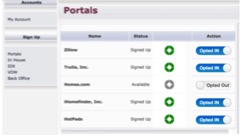

Until the past couple of years, MLSs relied on applications like Listhub and Point2 for listing syndication. These services ingest the MLS data and offer brokers the ability to manage their preferences. Zillow and Trulia are now requesting direct feeds from the MLS, like the one Realtor.com receives. This is hard, because it places a new demand on the RETS server: managing broker opt-in, or even opt-in on a listing-by-listing basis.

There are a number of ways that this can be managed. RETS server solutions like SolutionStar’s REDataVault™, CoreLogic’s RETS Pro™, and Bridge Interactive’s MLS Direct Syndication™ provide different variations of a broker or MLS dashboard for managing the opt-in/out preferences. Some of these solutions even handle the RETS agreements, and one has a billing system. Core systems from other MLS vendors that come with the MLS system offer yet another set of solutions.
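Whatever dashboard sits on top, the underlying rule is a preference lookup applied before a listing goes into a portal's feed. Here is a minimal Python sketch of one possible rule, not the logic of any product named above. It assumes that a listing-level choice overrides the broker-level choice and that the default is opted out. The function name, the `BrokerId` field, and the shape of the preference tables are hypothetical.

```python
def syndicated_listings(listings, broker_opt_in, listing_overrides, portal):
    """Return the listings that may be sent to `portal`.

    broker_opt_in:     {(broker_id, portal): bool}
    listing_overrides: {(listing_key, portal): bool}
    Assumption: a listing-level override wins over the broker preference,
    and a missing preference means the listing is not sent.
    """
    allowed = []
    for listing in listings:
        override_key = (listing["ListingKey"], portal)
        if override_key in listing_overrides:
            send = listing_overrides[override_key]
        else:
            send = broker_opt_in.get((listing["BrokerId"], portal), False)
        if send:
            allowed.append(listing)
    return allowed


# Made-up data for illustration.
listings = [
    {"ListingKey": "L1", "BrokerId": "B1"},
    {"ListingKey": "L2", "BrokerId": "B1"},
    {"ListingKey": "L3", "BrokerId": "B2"},
]
broker_opt_in = {("B1", "portal_x"): True}          # B2 has not opted in
listing_overrides = {("L2", "portal_x"): False}     # seller asked to withhold L2

feed = syndicated_listings(listings, broker_opt_in, listing_overrides, "portal_x")
```

Defaulting to opted out is the conservative choice here: a missing preference withholds the listing rather than publishing it by accident.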

Everything mentioned in this article is just the tip of the iceberg. RETS servers are becoming more complex every day, and that trend seems poised to accelerate as data management and data licensing expand. WAV Group is working with a number of MLSs on developing a roadmap for RETS. If you need some help, please contact us.

Many thanks to Bridge Interactive for allowing me to take some screenshots of their solution to illustrate the perspectives discussed in this article.